McAfee recently illuminated the alarming gap between road-test and real-world performance of autonomous vehicle sensors, when its researchers were able to fool a Tesla car into accelerating to 85 mph in a 35 mph zone using a piece of duct tape. This is not a problem confined to one model of vehicle; the Mobileye camera they tested is used in some 40 million vehicles, and it is likely that there are many other latent vulnerabilities in autonomous vehicles. We have already seen accidents involving autonomous vehicles from different carmakers at various levels of autonomy, from cruise control to the SAE’s Automation Level 4 or 5.

Autonomous vehicles on bumpy road to market

These vehicles have all been subjected to extensive and rigorous road tests. For example, Google’s Waymo autonomous vehicles have driven 20 million miles on public roads alone. So why do driverless cars continue to suffer from potentially dangerous defects that were not picked up during their ‘driving tests’?

The irony here is that real-world road tests don’t provide realistic validation of autonomous systems, because no two tests can replicate the same conditions. In a mathematical world, two plus two is always four, but in the real world no two situations are exactly equivalent. Even 70 miles driven on the same day will not expose a vehicle to the full range of conditions it may encounter in the same area on a different day. Similarly, no two days are the same; everything from pedestrian behaviour to traffic to weather conditions varies from one moment to the next. For example, a vehicle tested to perfection in daylight hours may not have encountered every position of the sun, which changes throughout the day and affects visibility. Relying on real-world road testing alone is simply unrealistic, because it would take hundreds of years for vehicles to be trained not just on every road, but on every relevant variable that could affect safe performance on those roads.

As we saw with the Tesla vehicle ‘hack’, even extensive real-world road tests cannot cover so-called ‘edge cases’: unexpected scenarios such as people tampering with speed signs. A vehicle image-recognition system could be trained on every road sign in existence, but has it been trained to read the same road signs when they are obscured by fog? Like many machine-learning technologies, autonomous vehicle perception systems can also be ‘biased for success’, in that they are often trained in artificially perfect conditions, leading them to fail when confronted with real-world imperfections.


This is why pioneering car technologies may appear safe and roadworthy without actually being so. When you read that an autonomous vehicle technology has exceeded however many million driverless miles – on the road, or virtually – keep in mind that this is by no means a metric for success. What matters when deciding that a car is safe and roadworthy is not how many miles it has covered, but how many ‘smart miles’ it has covered, and how many scenarios it was exposed to in those miles. Determining which scenarios are required to test the sensor perception, AI and more general vehicle design is now the big challenge we face as an industry in bringing safe autonomous passenger vehicles to fruition.

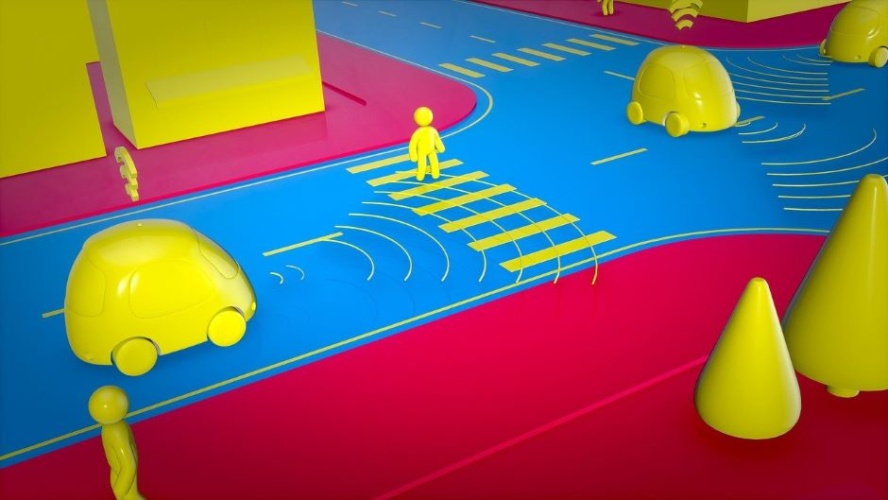

Leading companies from Audi to GM are addressing this challenge by using virtual test drives – simulated road environments for autonomous software systems – that can simultaneously test a car’s systems against thousands of variations in road conditions. This not only significantly cuts the cost of road testing but also improves the safety of the vehicles once they roll onto the roads. Technologies from Scenario Testing to 3D Environment Modelling enable future cars to be tested against far more varied miles and a far broader spectrum of road hazards in less time, without any real-world safety risks.
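To make the difference between raw miles and scenario coverage concrete, here is a minimal Python sketch. It is an illustration only, not how any particular simulation product works: the three scenario dimensions, their values and the `generate_scenarios` and `coverage` helpers are all hypothetical, and a real test programme would vary far more parameters.

```python
from itertools import product

# Hypothetical scenario dimensions, chosen only for illustration.
WEATHER = ["clear", "rain", "fog", "snow"]
TIME_OF_DAY = ["dawn", "noon", "dusk", "night"]
SIGN_CONDITION = ["clean", "partly_obscured", "tampered"]


def generate_scenarios():
    """Return every combination of the dimensions above as a dict."""
    return [
        {"weather": w, "time_of_day": t, "sign_condition": s}
        for w, t, s in product(WEATHER, TIME_OF_DAY, SIGN_CONDITION)
    ]


def coverage(tested, all_scenarios):
    """Fraction of distinct scenarios that were exercised at least once."""
    seen = {tuple(sorted(s.items())) for s in tested}
    universe = {tuple(sorted(s.items())) for s in all_scenarios}
    return len(seen & universe) / len(universe)


scenarios = generate_scenarios()

# A long road test that only ever met one set of conditions:
# many repetitions, but a single distinct scenario.
road_test = [
    {"weather": "clear", "time_of_day": "noon", "sign_condition": "clean"}
] * 1000

print(len(scenarios))                   # 4 * 4 * 3 = 48 combinations
print(coverage(road_test, scenarios))   # 1 of 48 scenarios covered
print(coverage(scenarios, scenarios))   # full sweep covers all of them
```

In this toy setup, a thousand repetitions of the same conditions cover only one scenario in 48, while a single pass over the generated grid covers them all.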

Our VTD software, for example, is used to test Advanced Driver Assistance Systems (ADAS) and autonomous driving systems. It can cover as many as 13 million km a day and simulate every possible driving condition, including ‘black swan events’ such as total system faults and storms. It can also form part of automotive, electronics and software companies’ test programmes to test and validate every aspect of autonomous driving.

Through our work with Singapore’s MPU Technical University, we are helping Singapore to achieve its mission to become the world’s first Smart Nation, which will include accommodating the widespread introduction of autonomous vehicles across all three levels: public, private and service transport. In addition to demanding an overhaul of its urban landscape, this will require sophisticated autonomous systems that understand concepts such as fleet management and relative traffic priorities, for example for emergency vehicles.

Simulation is key in these preparations. It is not possible to road-train an autonomous system to behave properly and safely in an environment that does not yet exist – a smart city. But, rather beautifully, designing this world in simulation and testing the systems there will then produce the means to make that world a reality.

Dr Luca Castignani is Chief Automotive Strategist at MSC Software
