A team of researchers from the University of Cambridge has developed two new systems to aid autonomous driving, which use a combination of simple smartphone technology and machine learning.

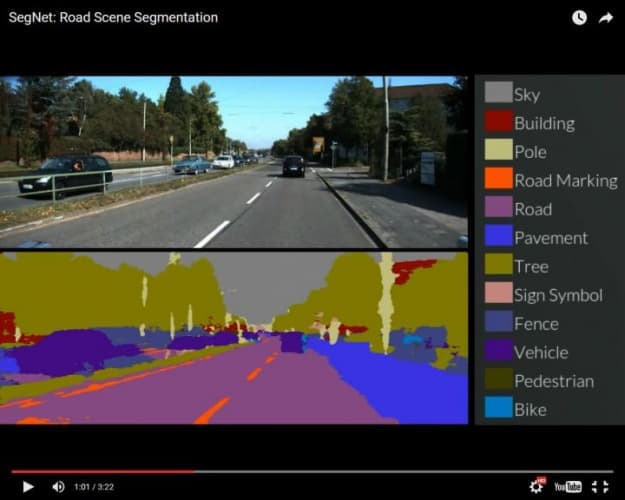

The first system, known as SegNet, takes an image of a street scene and sorts the components into 12 different categories, such as roads, street signs, pedestrians, buildings and cyclists. Using a base set of 5,000 images in which every pixel was labelled by a team of Cambridge undergraduates, SegNet learns by example, continually improving. According to the researchers, the system currently has a 90 per cent accuracy rate.
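As a rough illustration of what a per-pixel accuracy figure can mean, the sketch below compares a predicted label map against a hand-labelled one and reports the fraction of pixels that agree. It is not the Cambridge team's code, and the article does not say exactly how the 90 per cent figure was measured. The function name `pixel_accuracy`, the class list and the tiny 3x4 "images" are all assumptions made for the example.

```python
# Hypothetical sketch: per-pixel accuracy for a labelled street scene.
# Only five of the twelve categories are listed; the names come from the
# article, but the index assigned to each one is an assumption.
CLASSES = ["road", "street sign", "pedestrian", "building", "cyclist"]


def pixel_accuracy(predicted, labelled):
    """Return the fraction of pixels whose predicted class matches the hand label."""
    total = 0
    correct = 0
    for pred_row, label_row in zip(predicted, labelled):
        for pred, label in zip(pred_row, label_row):
            total += 1
            if pred == label:
                correct += 1
    if total == 0:
        raise ValueError("cannot score an empty image")
    return correct / total


# Made-up 3x4 label maps; each number is an index into CLASSES.
labelled = [
    [3, 3, 3, 3],  # buildings along the top
    [0, 0, 2, 3],  # road, one pedestrian pixel, a building
    [0, 0, 0, 0],  # road
]
predicted = [
    [3, 3, 3, 3],
    [0, 0, 0, 3],  # the pedestrian pixel is misread as road
    [0, 0, 0, 0],
]

print(f"pixel accuracy: {pixel_accuracy(predicted, labelled):.2%}")
```

Here 11 of the 12 pixels agree. A single overall figure like this can hide poor performance on small but important classes such as pedestrians, which is one reason per-class scores are often reported alongside it.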

"It's remarkably good at recognising things in an image, because it's had so much practice," said Alex Kendall, a PhD student in Cambridge’s Department of Engineering. "However, there are a million knobs that we can turn to fine-tune the system so that it keeps getting better."

For now, SegNet’s capabilities are limited primarily to highway and urban environments, although testing has also been successfully undertaken in other conditions and landscapes. While it is not yet ready to control a vehicle, it could be used as an early warning system, and is also significantly cheaper than the sensor technology widely used in today’s autonomous cars and trucks.

The second system uses a single colour image taken from the vehicle to determine location and orientation. It does this by analysing the geometry of the scene and comparing it against an online database. According to the Cambridge team, the technology is more accurate than GPS, and has the added benefit of functioning indoors and in tunnels.

"It will take time before drivers can fully trust an autonomous car," said Professor Roberto Cipolla, who led the research. "But the more effective and accurate we can make these technologies, the closer we are to the widespread adoption of driverless cars and other types of autonomous robotics."
