Chemistry is simultaneously one of the most digital and one of the most analogue of the sciences. In digital terms, a reaction either works or it doesn’t: on or off. In analogue terms, the behaviour of elements and compounds, atoms and molecules, results from the behaviour and interaction of electrons, and this is governed by the inherently slippery rules of quantum mechanics. One result is that when describing and, more importantly, monitoring a chemical reaction, a great many variables need to be taken into account: temperature, pressure, degree of mixing, transparency and colour of the reactants, and many others.

This is particularly relevant in small-molecule organic chemistry, where small tweaks in the starting conditions of a reaction can have a very large influence on the outcome. Small-molecule organic chemistry is also the domain of big business: it’s where most pharmaceutical and agrochemical chemistry takes place, and controlling the reactions in this sector is the key to products whose sales are worth many millions.

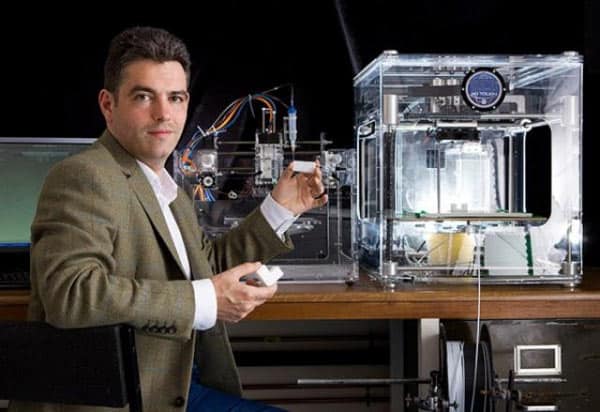

Research in this area is the province of Lee Cronin, Regius Professor of chemistry at Glasgow University. Cronin’s research, which we have covered in the news section of The Engineer over the past few years, focuses on digitising chemistry so that synthesis of compounds and, indeed, bulk materials can be completely automated: he calls the goal of this project a “chemputer”. To commercialise this, a spin-out company called DeepMatter has been formed, with Cronin taking the role of founding academic director. At the helm as Chief Executive is another chemist, Mark Warne, who told The Engineer that he sees the company as not just a chemicals concern but, at its heart, a Big Data company.

“The industrial problem that we're trying to solve is reproducibility,” Warne explained. “If I take a scientific experiment from the literature, or from a patent, or from a third party, I've probably got about a one in four chance of being able to reproduce it, and that's probably a slightly conservative view. It's not that the stuff is wrong in the publications. The detail in which data is recorded is insufficient to enable reproducibility. It’s like a recipe book: I can give somebody a recipe for a cheese soufflé which worked for me, but they might end up with a ramekin full of brown gunk. Part of that is because of different understandings of terms in the instructions, like what does ‘fold’ actually mean? To relate it back to chemistry, the content of your starting materials, your reagents, do vary. And then of course in amongst all that you've got environmental factors, so were you in a room with sunshine pouring through it? And then finally, we record all the successful reactions; we don't record anything that fails. So DeepMatter's platform enables you to collect and structure that data in a more reproducible way than a traditional protocol or a traditional scientific publication. And secondly, it provides you with the same level of detail whether your reaction is successful or unsuccessful.”

This is done via software and hardware, Warne added. The software is cloud-based, so recipes (as the collections of data on each reaction are inevitably called) can be shared anywhere in the world, whether the customer’s laboratory is in Bradford, Baltimore or Bangalore. “We have two bits of hardware,” Warne said. “One of them is a way of integrating analytical equipment, lab-based equipment, so that all those feeds are coming into your platform, so that you've got a lower burden to integrating the data together. Secondly, for your glass reaction vessel, we have a bespoke device. We call it device X, for the sake of argument. And that bespoke device allows you to collect things that are very familiar to a chemist, like temperature, and then feeds that are less familiar to a chemist, like video and sound. It's a data collection device that allows you to longitudinally stream data throughout a reaction, and at the end you're looking for the qualitative changes in your reaction, as well as quantitative ones. As a chemist, I now don't only have a measure of success or failure at the end of the reaction, but I know where in the reaction, in the elapsed time of my reaction, where the capricious moment was, or whatever it might be.”

The business of DeepMatter at this stage of its development is to set up a repository of information on chemical reactions. “As I said,” Warne explained, “much of the information in the literature is not particularly helpful. But some of it is. If you’re building a library, you don’t throw out all of the old books and start from scratch. You start with the best of the old books. And that’s what we’re doing. We’ve made an acquisition of Infochem from the publisher Springer Nature. Infochem develops software to handle retrieval, structures and reactions for synthetic chemistry. We've gained a bunch of tools that we would've chosen to build or access in some form or other, but with an established group of users who trust the brand and are able to see the opportunity for growth with the new platform. One of our DeepMatter users could buy the software cloud access with the hardware connection to connect an integrated environment and an opportunity to create a seamless interim process for research and process chemistry. At the moment, research chemistry doesn’t translate well into process chemistry. It’s sort of thrown over the fence, so to speak, and the scale-up from laboratory to process is often not smooth. So now with this big data package, we have the idea of a master process record that allows you to go seamlessly from one to another with a common framework and language. That means that, in principle, I, as a synthetic organic chemist, can give you, as a technician, the ability to do the same quality chemistry.”
