The human fingertip has over 3,000 touch receptors, most of which respond to pressure and enable us to manipulate objects. Dexterous prosthetic hands still lack this sense of touch, so their users can drop or crush the objects they handle.

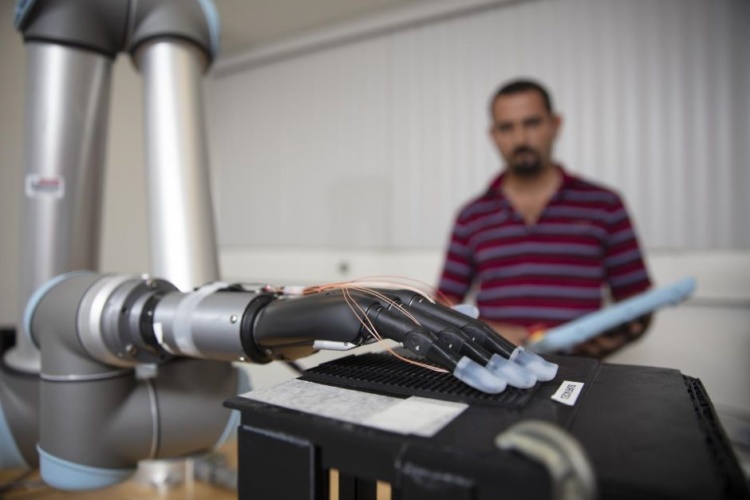

To create a more natural-feeling prosthetic hand interface, researchers from Florida Atlantic University's College of Engineering and Computer Science and their collaborators say they are the first to incorporate stretchable tactile sensors made with liquid metal into the fingertips of a prosthetic hand.

The liquid metal sensors are encapsulated in silicone-based elastomers, an approach the team says offers advantages over traditional sensors, including high conductivity, compliance, flexibility and stretchability. The study is published in the journal Sensors.

In the study, the researchers used individual fingertips on the prosthesis to distinguish between different speeds of sliding motion across textured surfaces. The four textures differed in only one parameter: the spacing between their ridges.
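As a rough physical intuition (an idealization, not a result reported in the study), a fingertip sliding at speed $v$ over evenly spaced ridges a distance $d$ apart crosses ridges at a rate $f = v/d$. Changing either the sliding speed or the ridge spacing therefore shifts the dominant vibration frequency a fingertip sensor picks up. With hypothetical values $v = 20\ \text{mm/s}$ and $d = 2\ \text{mm}$:

$$
f = \frac{v}{d} = \frac{20\ \text{mm/s}}{2\ \text{mm}} = 10\ \text{Hz}
$$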

The researchers trained four machine learning algorithms to detect the textures and speeds. They also built ten complex surfaces, each composed of a randomly generated permutation of the four textures, and collected 20 trials per surface to test whether the algorithms could tell the ten surfaces apart.

The results showed that combining tactile information from liquid metal sensors on four prosthetic fingertips at once made it possible to distinguish between complex, multi-textured surfaces. The machine learning algorithms also accurately distinguished all of the sliding speeds with each finger. The researchers say the technology could improve control of prosthetic hands and provide haptic feedback that reconnects amputees with a sense of touch they have lost.

"Significant research has been done on tactile sensors for artificial hands, but there is still a need for advances in lightweight, low-cost, robust multimodal tactile sensors," said Erik Engeberg, Ph.D., senior author, an associate professor in the Department of Ocean and Mechanical Engineering and a member of the FAU Stiles-Nicholson Brain Institute and the FAU Institute for Sensing and Embedded Network Systems Engineering (I-SENSE), who conducted the study with first author and Ph.D. student Moaed A. Abd.

"The tactile information from all the individual fingertips in our study provided the foundation for a higher hand-level of perception enabling the distinction between ten complex, multi-textured surfaces that would not have been possible using purely local information from an individual fingertip,” Engeberg added. “We believe that these tactile details could be useful in the future to afford a more realistic experience for prosthetic hand users through an advanced haptic display, which could enrich the amputee-prosthesis interface and prevent amputees from abandoning their prosthetic hand."

According to FAU, the researchers compared four machine learning algorithms for their classification capabilities: K-nearest neighbour (KNN), support vector machine (SVM), random forest (RF) and neural network (NN). Time-frequency features were extracted from the liquid metal sensor signals to train and test the algorithms. The NN generally performed best at speed and texture detection with a single finger, and it reached 99.2 per cent accuracy in distinguishing the ten multi-textured surfaces when using the four liquid metal sensors on four fingers simultaneously.
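The article does not describe the team's code or exact features, so the following is only a minimal sketch of how time-frequency features can feed one of the four compared classifiers. It assumes synthetic sinusoid-plus-noise signals in place of real sensor data, a hypothetical 200 Hz sampling rate, four hypothetical surfaces labeled A to D, and a crude short-time band-energy feature (a simplified STFT). KNN is used here only because it fits in a few lines of standard-library Python; in the study the NN performed best.

```python
import cmath
import math
import random
from collections import Counter

FS = 200.0     # hypothetical sampling rate (Hz); not taken from the study
WINDOW = 64    # samples per short-time window
N_WINDOWS = 2  # windows per trial, so 128 samples per trial
N_BANDS = 8    # frequency bands per window

# Hypothetical surfaces, each modeled by one dominant vibration frequency (Hz).
SURFACE_FREQS = {"A": 10.0, "B": 30.0, "C": 50.0, "D": 70.0}


def synth_trial(freq_hz, rng, noise=0.2):
    """Synthetic stand-in for one sensor trace: a sinusoid with random phase
    plus Gaussian noise. Real liquid metal sensor data would replace this."""
    phase = rng.uniform(0, 2 * math.pi)
    n = WINDOW * N_WINDOWS
    return [math.sin(2 * math.pi * freq_hz * i / FS + phase) + rng.gauss(0, noise)
            for i in range(n)]


def window_band_energies(window):
    """Naive DFT magnitudes of one window, summed into N_BANDS equal-width
    bands below the Nyquist frequency and normalized to sum to 1."""
    n = len(window)
    half = n // 2
    mags = [abs(sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(window)))
            for k in range(half)]
    width = half // N_BANDS
    bands = [sum(mags[b * width:(b + 1) * width]) for b in range(N_BANDS)]
    total = sum(bands) or 1.0
    return [b / total for b in bands]


def time_frequency_features(signal):
    """Concatenate band energies from consecutive windows (a crude STFT)."""
    feats = []
    for w in range(N_WINDOWS):
        feats.extend(window_band_energies(signal[w * WINDOW:(w + 1) * WINDOW]))
    return feats


def knn_predict(train, query, k=3):
    """Majority vote among the k training examples nearest in Euclidean distance."""
    nearest = sorted((math.dist(feat, query), label) for feat, label in train)[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def run_demo(trials_per_class=20, n_train=15, seed=42):
    """Build a labeled synthetic dataset, split it into train/test, and
    return the KNN classification accuracy on the test split."""
    rng = random.Random(seed)
    train, test = [], []
    for label, freq in SURFACE_FREQS.items():
        for t in range(trials_per_class):
            example = (time_frequency_features(synth_trial(freq, rng)), label)
            (train if t < n_train else test).append(example)
    correct = sum(knn_predict(train, feat) == label for feat, label in test)
    return correct / len(test)


if __name__ == "__main__":
    print(f"KNN accuracy on synthetic surfaces: {run_demo():.2f}")
```

The 20 trials per class loosely mirror the study's 20 trials per surface; everything else (frequencies, noise level, window size, train/test split) is an illustrative assumption. Swapping `knn_predict` for an SVM, random forest or neural network would follow the same features-then-classifier pattern.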

“With this latest technology from our research team, we are one step closer to providing people all over the world with a more natural prosthetic device that can 'feel' and respond to its environment," said Stella Batalama, Ph.D., dean, College of Engineering and Computer Science.