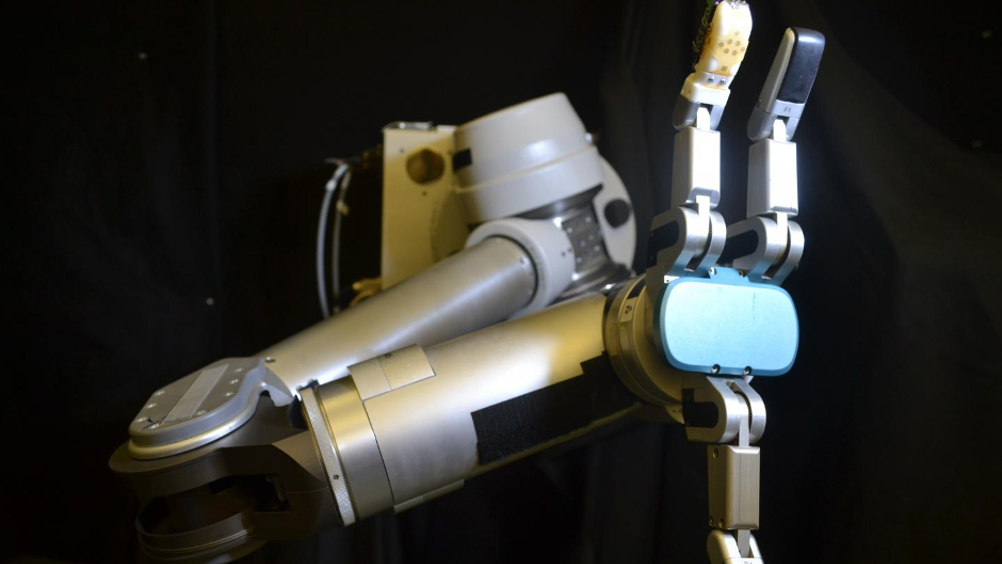

Flexible sensor skin gives robots a sense of dexterity

Robots could soon handle objects with the same dexterity as humans thanks to a flexible sensor skin developed by engineers from the University of Washington and UCLA.

The skin can be stretched over any part of a robot's body, or over a prosthetic, to accurately convey information about the shear forces and vibration that are critical to grasping and manipulating objects.

The bio-inspired robot sensor skin mimics the way a human finger experiences tension and compression as it slides along a surface or distinguishes different textures. It measures this tactile information with precision similar to that of human skin and is described in a paper published in Sensors and Actuators A: Physical.

"Robotic and prosthetic hands are really based on visual cues right now - such as, 'Can I see my hand wrapped around this object?' or 'Is it touching this wire?' But that's obviously incomplete information," said senior author Jonathan Posner, a UW professor of mechanical engineering and of chemical engineering.

"If a robot is going to dismantle an improvised explosive device, it needs to know whether its hand is sliding along a wire or pulling on it. To hold on to a medical instrument, it needs to know if the object is slipping. This all requires the ability to sense shear force, which no other sensor skin has been able to do well," Posner said.
