The system, developed by researchers from North Carolina State University (NC State), the University of North Carolina and Arizona State University (ASU), is said to be the first to rely solely on reinforcement learning to tune the robotic prosthesis.

Robotic prosthetic knees need to be tuned to accommodate the people they are fitted to. According to NC State, the new tuning system tweaks 12 different control parameters, addressing prosthesis dynamics, such as joint stiffness, throughout the entire so-called gait cycle.

A human practitioner would normally work with the patient to modify a handful of parameters, a process that can take hours. The new system instead uses a reinforcement learning program to tune all 12 parameters, allowing patients to walk on a level surface with a powered prosthetic knee after about 10 minutes.

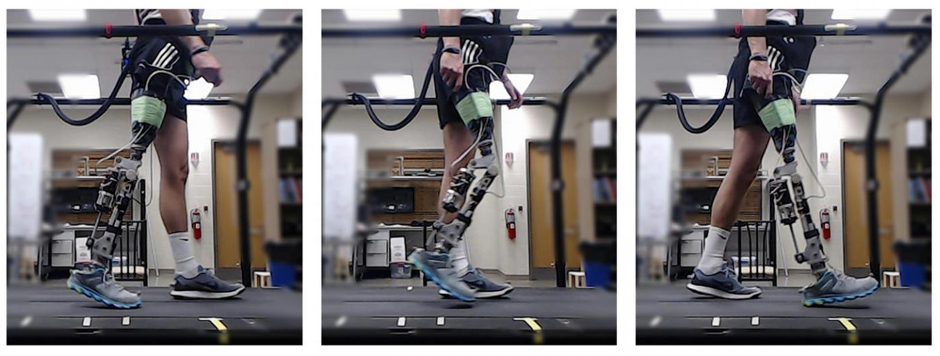

"We begin by giving a patient a powered prosthetic knee with a randomly selected set of parameters," said Helen Huang, co-author of a paper on the work and a professor in the Joint Department of Biomedical Engineering at NC State and UNC. "We then have the patient begin walking, under controlled circumstances.

"Data on the device and the patient's gait are collected via a suite of sensors in the device," Huang said. "A computer model adapts parameters on the device and compares the patient's gait to the profile of a normal walking gait in real time. The model can tell which parameter settings improve performance and which settings impair performance. Using reinforcement learning, the computational model can quickly identify the set of parameters that allows the patient to walk normally. Existing approaches, relying on trained clinicians, can take half a day."

While the work is currently done in a controlled, clinical setting, one goal is to develop a wireless version of the system, which would allow users to continue fine-tuning the powered prosthesis parameters while using it in real-world environments.

"This work was done for scenarios in which a patient is walking on a level surface, but in principle, we could also develop reinforcement learning controllers for situations such as ascending or descending stairs," said Jennie Si, co-author of the paper and a professor of electrical, computer and energy engineering at ASU.

"I have worked on reinforcement learning from the dynamic system control perspective, which takes into account sensor noise, interference from the environment, and the demand of system safety and stability," Si said. "I recognised the unprecedented challenge of learning to control, in real time, a prosthetic device that is simultaneously affected by the human user. This is a co-adaptation problem that does not have a readily available solution from either classical control designs or the current, state-of-the-art reinforcement learning controlled robots. We are thrilled to find out that our reinforcement learning control algorithm actually did learn to make the prosthetic device work as part of a human body in such an exciting applications setting."

The researchers note that several questions need to be addressed before the system is available for widespread use.

"For example, the prosthesis tuning goal in this study is to meet normative knee motion in walking," Huang said. "We did not consider other gait performance [such as gait symmetry] or the user's preference. For another example, our tuning method can be used to fine-tune the device outside of the clinics and labs to make the system adaptive over time with the user's need. However, we need to ensure the safety in real-world use since errors in control might lead to stumbling and falls."
