Britain’s contribution to digital technologies is not particularly well known. These technologies are more often associated with the US, the birthplace of the transistor and the home of many of the most influential hardware and software developers of recent years, or with East Asia, which flooded markets with low-cost consumer electronics in the 80s and 90s. But Britain gave rise to important technologies without which two of the most influential and pervasive aspects of the digital world, mobile telephony and computing and the success of the internet, would not exist. And innovations in digital technologies still abound in Britain, which may help computing to become even faster and advance our understanding of the most mysterious computer of all: the squishy organic one we all carry in our heads.

Of course, the invention of the world wide web by British computer scientist Tim Berners-Lee at CERN, and his subsequent donation of the concept to the public domain, is well known. What is more obscure is that fibre-optic communications, the technology that allows the rapid, error-free transmission of vast quantities of data around the world, had its origin in the UK. “The Web and Internet are only possible because the cost of communicating is very low, and independent of distance,” said Richard Epworth, a member of the original team at Standard Telecommunications Laboratories (STL), where optical fibre was first developed in the 1960s.

Research into data transmission along optical fibre began because of the potential for low-loss communication offered by digital signalling, Epworth told The Engineer. “The success of television led to a belief that in the coming decades (the near future) everyone would have a videophone, so there would be a need to transmit much more data,” he explained. “And there was a big pool of technically skilled talent around: not necessarily graduates, but people who had worked in telecommunications, signalling and especially radar in the war, who were still young and looking for employment.”

Optical communication was a popular option, because even then it was seen as well suited to digital technology, although not necessarily to electronics. “If you’re transmitting an analogue signal electrically, by manipulating a current along a wire, then as soon as you have any interference or loss of signal quality, you have problems; you don’t need much distortion at all before the signal becomes incomprehensible,” Epworth said. “But if you’re transmitting digitally, the signal is either on or off, and any distortion doesn’t matter at all.” It’s exactly the same idea that made Morse code signalling so successful, he added: all that matters is whether there is any signal or not, and visible-light Morse works at the speed of light. What fibre optics promised, he said, was simply to increase the range of the visible-light Morse idea and send it around corners, plus an increase in speed compared with electrical signalling through copper. “The invention of the laser increased interest, and transmission through free-space was studied, but it’s too affected by weather, so some sort of guide for the light was obviously needed.”
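Epworth’s point about on/off signalling can be illustrated with a toy model. The sketch below is not taken from STL’s work: the channel model, the attenuation and noise figures, the threshold and the function names are all assumptions chosen for illustration. It sends the same distortion through a digital and an analogue signal and shows that the digital bits survive so long as the distortion stays on the right side of the decision threshold.

```python
import random


def transmit(levels, attenuation=0.6, noise=0.15, seed=0):
    """Toy channel (assumed model): scale each level, then add bounded noise."""
    rng = random.Random(seed)
    return [attenuation * x + rng.uniform(-noise, noise) for x in levels]


def decode_on_off(received, threshold=0.3):
    """Decide 'on' (1) or 'off' (0) for each received sample."""
    return [1 if x > threshold else 0 for x in received]


# Digital case: only the on/off decision matters, so the distortion is discarded.
bits = [1, 0, 1, 1, 0, 0, 1, 0]
received = transmit([float(b) for b in bits])
recovered = decode_on_off(received)

# Analogue case: the value itself is the message, so the distortion stays in it.
analogue = [0.10, 0.35, 0.80, 0.55]
analogue_received = transmit(analogue)
analogue_error = max(abs(a - r) for a, r in zip(analogue, analogue_received))

print("bits recovered intact:", recovered == bits)
print("worst analogue error:", round(analogue_error, 3))
```

With these assumed figures an “on” always arrives between 0.45 and 0.75 and an “off” between -0.15 and 0.15, so a threshold of 0.3 separates them every time. Heavier distortion would eventually cause bit errors, which is why long links regenerate the signal before that point is reached.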

The principle underlying fibre optics was first demonstrated in 1842 in Paris, when Daniel Colladon and Jacques Babinet showed that light could be ‘bent’ inside a stream of water falling from a horizontal spout; the shallow angle of the light striking the interface between water and air caused it to be reflected inside the curved stream of water. This technique was used with glass fibres to provide illumination for dentistry and other medical examinations as early as the 1920s. For communications, however, the approach wasn’t investigated seriously at first, Epworth said, because the glass attenuated the signal too much.
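The reflection inside the water stream is what physicists call total internal reflection, and the angle at which it sets in follows from Snell’s law. The sketch below is a minimal illustration, assuming textbook refractive indices of about 1.33 for water and 1.00 for air (neither figure appears in the article) and using hypothetical function names. Angles are measured from the perpendicular to the surface, so a ray that skims the interface at a shallow angle has a large angle of incidence.

```python
import math


def critical_angle_deg(n_inside, n_outside):
    """Smallest angle of incidence (from the normal) giving total internal reflection."""
    if n_outside >= n_inside:
        raise ValueError("total internal reflection needs n_inside > n_outside")
    return math.degrees(math.asin(n_outside / n_inside))


def is_totally_reflected(incidence_deg, n_inside, n_outside):
    """True if a ray at this angle of incidence stays inside the denser medium."""
    return incidence_deg > critical_angle_deg(n_inside, n_outside)


WATER, AIR = 1.33, 1.00  # assumed textbook values

print("critical angle, water to air:", round(critical_angle_deg(WATER, AIR), 1), "degrees")
print("grazing ray (80 degrees) trapped:", is_totally_reflected(80, WATER, AIR))
print("steep ray (30 degrees) trapped:", is_totally_reflected(30, WATER, AIR))
```

For water and air the critical angle comes out at roughly 49 degrees, so light travelling nearly parallel to the surface of the stream is trapped, while light striking it more squarely escapes.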

Harlow-based STL was one of several research organisations around the world trying to make optical communication work in the 60s, Epworth said, and the field was wide open, with many avenues being explored. Initial research took the term ‘light-pipe’ seriously: it used a hollow, air-filled tube as the transmission medium. “We also did a lot of work around planar thin-films, and with using optical waveguides where the majority of the signal would actually be outside the transmission medium,” Epworth said. “And for a while, it looked like the winning technique would be one using microwaves.”

The breakthrough came courtesy of a researcher from Hong Kong, Charles Kuen Kao, who, working with microwave expert George Hockham, theorised that a purer glass than was then available would be suitable for visible light transmission; his key realisation was that it was the purity of the material that was the problem, rather than the fundamental physics. Kao and Hockham’s paper on light transmission in a cladded glass fibre, published 50 years ago this year in the Proceedings of the IEE, is recognised as the beginning of practical optical fibres, and was instrumental in winning Kao a share of the 2009 Nobel Prize in Physics.

Subsequently Kao toured the world trying to get other institutions interested in the technology, but it wasn’t until Bell Labs succeeded in synthesising a very pure, ultra-transparent silica from elemental silicon and oxygen that it was taken seriously commercially. The UK retained involvement in the early application of the technology, setting up the first transmission system between Hitchin and Stevenage, but the fact that it was a multi-national project, and that no commercial remnant of STL exists, means that its status as a UK innovation has slipped from the public consciousness, Epworth believes. With Kao now suffering from advanced Alzheimer’s disease, Epworth is keen to bring him and his work back to public knowledge.

Another major digital innovation is more in the public eye owing to commercial machinations. The ARM (Acorn RISC Machine) processor, an early commercial RISC (reduced instruction set computing) microprocessor, was developed at Acorn Computers by a team led by Stephen Furber. Talking to The Engineer in 2010, Furber explained that at the time, chip-making was dominated by American giants such as IBM, which had very set ideas on how chip architecture should work. The ARM team’s key breakthrough was in realising that they needn’t be bound by these ideas and could design a chip without manufacturing it themselves. The result was a processor that consumed much less power than its competitors, an advantage that has seen its successors dominate the market for mobile phones, tablets and laptops. This success recently saw ARM bought by Japanese firm SoftBank for about £24bn ($32bn), a deal that remains controversial.

The work of the optical fibre pioneers continues through optical computing research, which uses light and quantum states to design processing systems that function at the speed of light. Optalysys, a Cambridge-based spin-out, is using diffraction, low-power lasers, liquid crystals and data processing techniques similar to those used in computational fluid dynamics to make a computer that works at exaflop speeds (a billion billion calculations per second), and expects to unveil its prototype this year.

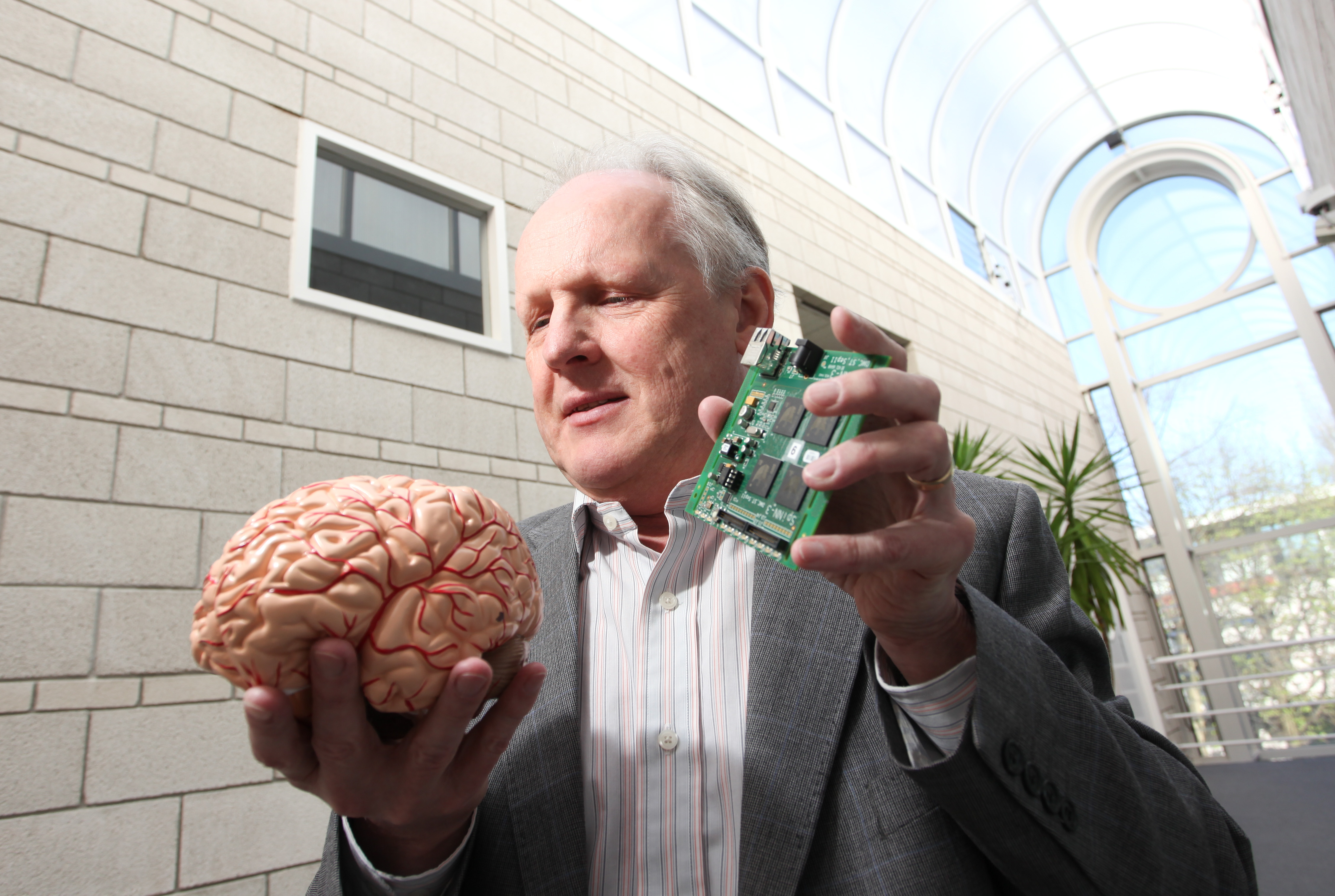

Meanwhile, Furber is using arrays of RISC chips (up to a million in total) in a project to simulate the calculating operations of the human brain, aiming to build silicon-based systems that can use fuzzy logic in the same way as neurons. The SpiNNaker (spiking neural network architecture) project at Manchester University aims to bridge the gap between the understanding of neurons and that of the whole brain. One outcome of this might be new treatments for Alzheimer’s disease, along with more advanced and capable, but lower-power, brains for robots.
