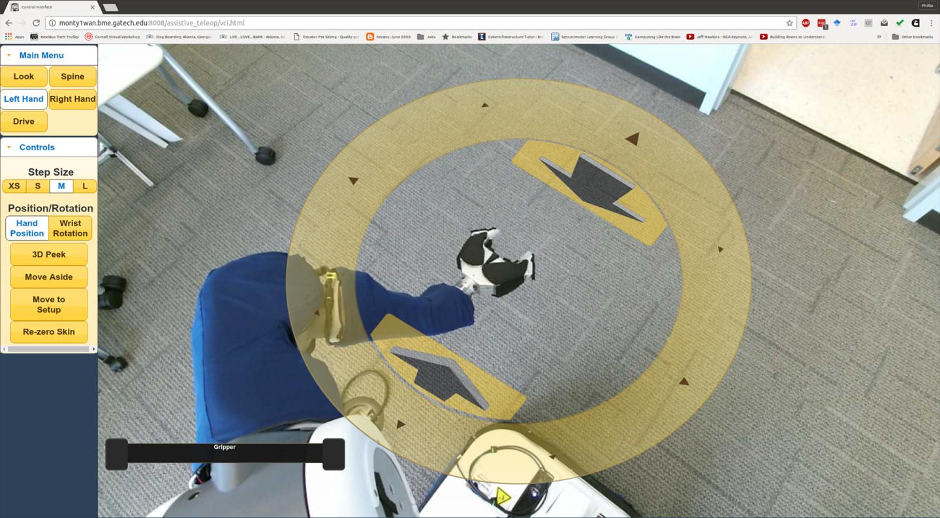

A web-based interface developed by researchers at the Georgia Institute of Technology is said to display a "robot's eye view" of the robot's surroundings, helping users interact with the world through the machine.

The system could help make sophisticated robots more useful to people who do not have experience operating complex robotic systems.

Study participants interacted with the robot interface using standard assistive computer access technologies - such as eye trackers and head trackers - that they were already using to control their PCs.

A paper published in PLOS ONE reported on two studies showing how such "robotic body surrogates" - which can perform tasks similar to those of humans - could improve the quality of life for users. The work could provide a foundation for developing faster and more capable assistive robots.

"We have taken the first step toward making it possible for someone to purchase an appropriate type of robot, have it in their home and derive real benefit from it," said Phillip Grice, a recent Georgia Institute of Technology PhD graduate who is first author of the paper.

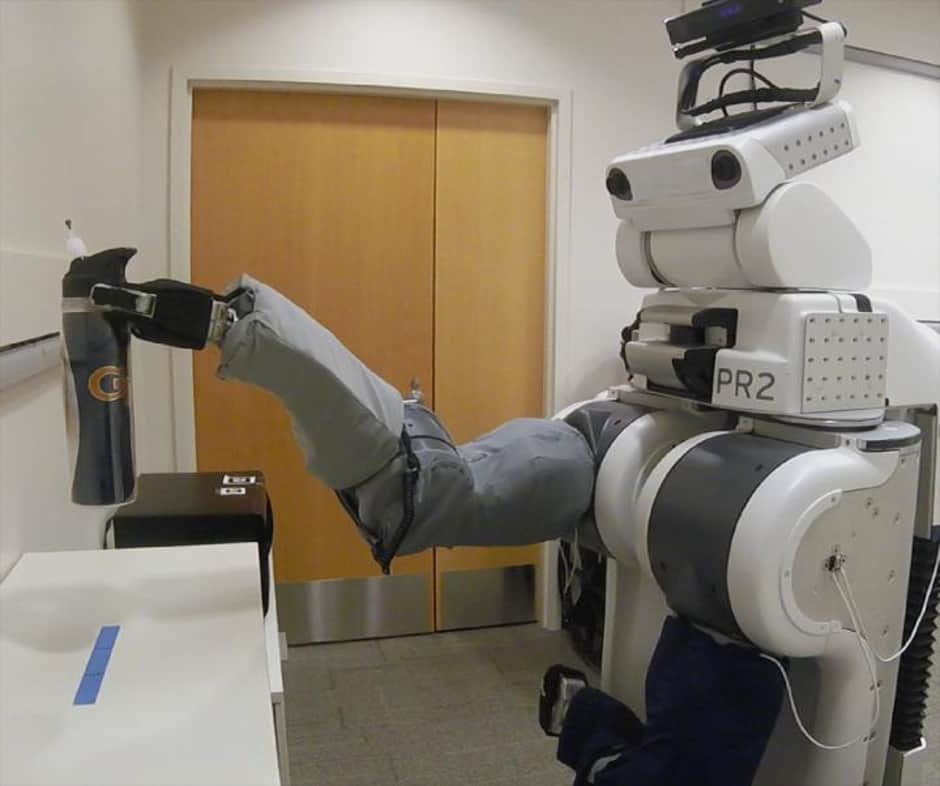

Grice and Prof Charlie Kemp from the Wallace H Coulter Department of Biomedical Engineering at Georgia Tech and Emory University used a PR2 mobile manipulator manufactured by Willow Garage for the two studies. The wheeled robot has 20 degrees of freedom, with two arms and a "head," giving it the ability to manipulate objects including water bottles, flannels, hairbrushes and even an electric shaver.

In their first study, Grice and Kemp made the PR2 available across the internet to a group of 15 participants with severe motor impairments. The participants learned to control the robot remotely, using their own assistive equipment to operate a mouse cursor and carry out a personal care task. Eighty per cent of the participants were able to manipulate the robot to pick up a water bottle and bring it to the mouth of a mannequin.

In the second study, the researchers provided the PR2 and interface system to Henry Evans, a California man with very limited control of his body following a stroke.

Over seven days, Evans completed tasks and devised novel uses that involved operating both robot arms simultaneously, using one arm to manipulate a washcloth and the other to wield a brush.

"A lot of the assistive technology available today is designed for very specific purposes," said Grice. "What Henry has shown is that this system is powerful in providing assistance and empowering users. The opportunities for this are potentially very broad."

The interface allowed Evans to care for himself in bed over an extended period of time. "The most helpful aspect of the interface system was that I could operate the robot completely independently, with only small head movements using an extremely intuitive graphical user interface," Evans said.

The web-based interface shows users what the world looks like from cameras located in the robot's head. Clickable controls overlaid on the view allow users to move the robot around a home or other environment and to control its hands and arms. When users move the robot's head, for instance, the screen displays the mouse cursor as a pair of eyeballs to show where the robot will look when the user clicks. Clicking on a disc surrounding the robot's hands allows users to select a motion. While a user drives the robot around a room, lines that follow the cursor on the interface indicate the direction in which it will travel.

Building the interface around the actions of a simple single-button mouse allows people with a range of disabilities to use the interface without lengthy training sessions.
