Shadows help robot AI gauge human touch

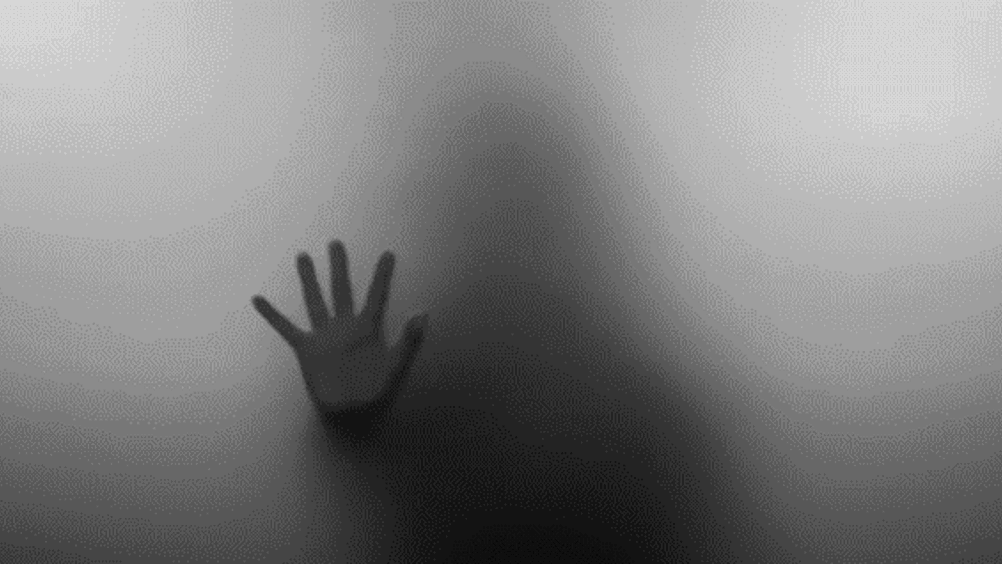

Researchers in the US have equipped a robot with an AI-driven vision system that enables it to recognise different types of touch via shadows.

The team from Cornell University installed a USB camera inside a soft, deformable robot. The camera captures the shadows that hand gestures cast on the robot's skin, and machine-learning software classifies them. Known as ShadowSense, the technology evolved from a project to create inflatable robots that could help guide people to safety during emergency evacuations. In a smoke-filled building, for example, such a robot could detect the touch of a hand and lead the person to an exit.

Rather than installing a large number of contact sensors, which would add weight and complex wiring and would be difficult to embed in a deforming skin, the Cornell team took a counterintuitive approach and turned to computer vision.
